Why is Data so Important?

As digitization has advanced rapidly in many companies, we now face ever-growing sets of data spread across various databases, which are unstructured, unmapped, unsynchronized and non-harmonized. Quintillions of bytes of data are generated every day from sources such as digital media, web services, business applications and machine logs, and the spread of the Internet of Things (IoT) accelerates this growth even further. According to “The Digital Universe in 2020” study, by that year there will be around 40 trillion gigabytes (40 zettabytes) of data at our disposal. However, only about a third of it is likely to hold real value.

Businesses find themselves flooded with data and unable to extract the information it holds using conventional tools. More and more entrepreneurs are considering data-driven intelligence solutions to increase their competitiveness, but they often have no idea how to go about it.

ML Makes Data-driven Decisions Common

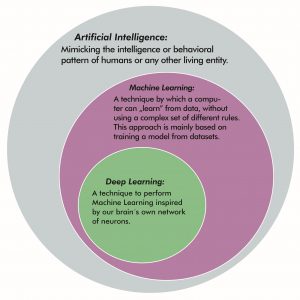

Machine Learning (ML), the backbone of Artificial Intelligence, allows us to map and structure data, identify patterns and consumer trends, and extract additional information from them, so that subsequent decisions are made faster and more accurately. In practice, it is a set of tools, ready-made algorithms used to build a mathematical model from training data. Computer systems using ML models can improve by learning from their input and make decisions with minimal human assistance. It is certainly not a new concept: many of these algorithms were developed as early as the 1970s, but it is the growth in computing power that fuels their current momentum.
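To make "building a mathematical model from training data" concrete, here is a minimal sketch using the simplest possible model: a straight line fitted by ordinary least squares. The function name `fit_line` and the training values are hypothetical and chosen only for illustration; real ML models have far more parameters, but the principle of estimating them from examples and then predicting unseen cases is the same.

```python
from statistics import mean

def fit_line(xs, ys):
    """Fit y = a * x + b to training data by ordinary least squares."""
    x_bar, y_bar = mean(xs), mean(ys)
    # Slope: covariance of x and y divided by the variance of x
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    den = sum((x - x_bar) ** 2 for x in xs)
    a = num / den
    # Intercept: the fitted line passes through the point of means
    b = y_bar - a * x_bar
    return a, b

# Hypothetical training data, e.g. marketing spend (x) vs. sales (y)
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]
a, b = fit_line(xs, ys)

# Use the learned model to predict an input it has never seen
prediction = a * 5.0 + b
print(a, b, prediction)
```

Here the "learning" is a closed-form calculation; most ML models instead adjust their parameters iteratively, which is where the extra computing power comes in.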

There is a variety of software frameworks, libraries and programs that help organizations store and process the data machine learning relies on, such as NoSQL databases and the open source data-processing frameworks Hadoop MapReduce and Apache Spark. Machine learning is used in a wide range of applications in and across sectors, from agriculture to financial market analysis, and from logistics to medical diagnosis, anatomy and DNA sequence classification.
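Frameworks such as Hadoop MapReduce split processing into a map step, a shuffle that groups intermediate results by key, and a reduce step. The sketch below imitates those three phases in plain, single-machine Python on the classic word-count task; it is not Hadoop's or Spark's API, and the function names are hypothetical. The real frameworks run the same logic distributed across many machines.

```python
from collections import defaultdict
from itertools import chain

def map_phase(document):
    # Emit a (word, 1) pair for every word in the document
    return [(word.lower(), 1) for word in document.split()]

def shuffle(pairs):
    # Group all values by key, as the framework does between map and reduce
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups

def reduce_phase(groups):
    # Combine the grouped values; here, sum the counts for each word
    return {key: sum(values) for key, values in groups.items()}

documents = ["Spark and Hadoop", "Hadoop MapReduce", "spark streaming"]
mapped = chain.from_iterable(map_phase(d) for d in documents)
counts = reduce_phase(shuffle(mapped))
print(counts)
```

Because each document is mapped independently and each key is reduced independently, both phases can be parallelized, which is what makes the model suitable for very large datasets.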

The innovations we provide at Inero Software are also cross-industry in nature. We’ve been working on AI-based solutions for the logistics and tourism industries and for digital healthcare, to name a few. Currently, we’re applying our know-how in deep learning, an ML method based on artificial neural networks, to a medical project that automatically recognizes and classifies certain features in images. The goal of this project is to visualize selected human organs in 3D, based on computed tomography (CT) and magnetic resonance imaging (MRI) scans. We’re working on liver visualization, and for this we’re using convolutional neural networks, a class of deep neural networks, to extract the structure of the liver from the full imaging data. Because each visualization shows a specific case of liver pathology, these personalized models can show patients what their livers look like, indicate the location of a tumor, or help educate medical students.
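The core operation of a convolutional neural network is sliding a small kernel over the image and computing a weighted sum at each position. The toy sketch below shows that single operation, followed by a ReLU activation, on a hypothetical 4×4 "slice". It is not the model used in the project described above: the kernel here is hand-written to detect a vertical edge, whereas a trained CNN learns thousands of such weights and stacks many layers (often in 3D for CT/MRI volumes).

```python
def conv2d(image, kernel):
    """Valid 2D cross-correlation, which is what CNN 'convolution' layers compute."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = [[0.0] * out_w for _ in range(out_h)]
    for i in range(out_h):
        for j in range(out_w):
            # Weighted sum of the kh x kw patch currently under the kernel
            out[i][j] = sum(
                image[i + u][j + v] * kernel[u][v]
                for u in range(kh)
                for v in range(kw)
            )
    return out

def relu(feature_map):
    # Keep positive responses and zero out the rest
    return [[max(0.0, x) for x in row] for row in feature_map]

# Hypothetical 4x4 intensity slice: dark tissue on the left, bright on the right
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hand-written vertical-edge detector; in a real CNN these weights are learned
kernel = [
    [-1, 1],
    [-1, 1],
]
edges = relu(conv2d(image, kernel))
print(edges)
```

The resulting feature map responds only along the boundary between dark and bright regions; segmentation networks build on many such learned responses to decide, pixel by pixel, which voxels belong to the organ.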

More commonly, machine learning can be used to enhance decision making, e.g. by building a recommendation system that draws on historical data to suggest what actions employees should take to maximize the effectiveness of an enterprise. Such historical data could be, for example, records of employee behavior correlated with how it affected the company. Organizations can also assess the risk of a given decision from historical data and build a recommendation system that accounts for risk sensitivity. With its help, we can predict whether a small change in some aspect could translate into a large impact on operational performance, profit or loss in the future, or whether decisions that seem unimportant are in fact highly significant.
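The idea that "a small change can translate into a large impact" can be checked with a simple sensitivity analysis: nudge each input by 1% and measure how much the output moves. In the minimal sketch below, a hypothetical hand-written `profit` function stands in for a model that would, in practice, be learned from historical data; the `sensitivities` helper and all input values are likewise illustrative assumptions.

```python
def profit(price, volume, unit_cost, fixed_cost):
    # Hypothetical stand-in for a trained model: revenue minus costs
    return price * volume - unit_cost * volume - fixed_cost

def sensitivities(model, inputs, rel_step=0.01):
    """Relative change in the model output for a +rel_step change in each input."""
    base = model(**inputs)
    result = {}
    for name, value in inputs.items():
        # Copy the inputs, bumping only the current one
        bumped = dict(inputs, **{name: value * (1 + rel_step)})
        result[name] = (model(**bumped) - base) / abs(base)
    return result

inputs = {"price": 10.0, "volume": 1000.0, "unit_cost": 8.0, "fixed_cost": 1500.0}
s = sensitivities(profit, inputs)
for name, value in s.items():
    print(f"{name}: {value:+.1%}")
```

With these toy numbers the margin is thin, so a 1% change in price shifts profit by roughly 20%, while a 1% change in fixed costs shifts it by only about 3%. Ranking inputs this way is one simple basis for flagging which decisions deserve closer attention.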

Solutions to Go

Artificial Intelligence and Machine Learning, once reserved for a very narrow group of clients such as wealthy companies with ample resources, are now in common use. Large vendors offer off-the-shelf tools, such as Google with its multi-language online handwriting recognition system, which lets mobile users handwrite text on their phones and tablets, or Amazon with Rekognition, a deep learning-based image recognition service that enables you to search, verify and organize large collections of images. You simply upload a picture and receive information on what it contains, along with a confidence score, covering objects, text, scenes and activities, as well as detection of any inappropriate content. You Only Look Once (YOLO) works on a similar premise: it is used to recognize, detect and track objects in camera images. It is widely used to detect fires and other incidents and to monitor CCTV feeds, for example to count people and vehicles or to check the availability of parking spaces at any given moment. Formerly, it would have been necessary to first train a neural network for hours; now such features are offered as ready-made solutions.
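Detectors like YOLO typically return many candidate boxes, each with a label and a confidence score, and the application still has to clean that output up before, say, counting parked cars. The sketch below shows two common post-processing steps: dropping low-confidence detections and greedy non-maximum suppression based on intersection over union (IoU). The dictionary format, thresholds and function names are hypothetical; this is not the output format of Rekognition or of any particular YOLO implementation.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def filter_detections(detections, min_conf=0.5, iou_thresh=0.5):
    """Drop low-confidence boxes, then apply greedy non-maximum suppression."""
    candidates = sorted(
        (d for d in detections if d["confidence"] >= min_conf),
        key=lambda d: d["confidence"],
        reverse=True,
    )
    kept = []
    for det in candidates:
        # Keep a box unless it heavily overlaps a stronger box with the same label
        if all(
            k["label"] != det["label"] or iou(k["box"], det["box"]) < iou_thresh
            for k in kept
        ):
            kept.append(det)
    return kept

# Hypothetical raw detector output for one camera frame
raw = [
    {"label": "car", "confidence": 0.92, "box": (10, 10, 50, 40)},
    {"label": "car", "confidence": 0.81, "box": (12, 11, 52, 42)},  # near-duplicate
    {"label": "car", "confidence": 0.77, "box": (100, 20, 140, 50)},
    {"label": "person", "confidence": 0.30, "box": (60, 5, 70, 30)},  # too uncertain
]
cars = [d for d in filter_detections(raw) if d["label"] == "car"]
print(len(cars))
```

Here the near-duplicate box is suppressed and the uncertain detection is discarded, leaving two cars. The thresholds are a trade-off: raising `min_conf` reduces false alarms but may miss real objects.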

More and more such tools are released regularly. Off-the-shelf solutions are often user-friendly, save time and cost the end customer less, but by their nature they are not as specialized as tailor-made software. This raises an important question: when should dedicated software be preferred over ready-made products? We’ll be happy to address it in upcoming posts on our blog.

The last example we wish to highlight is Dialogflow, Google’s AI platform available to anyone looking to build intelligent chatbots. Chatbots are gradually replacing humans in call centers: they can skillfully place calls and collect information from customers, or respond in detail to their inquiries.

I would be remiss if I didn’t mention that the Turing Test is slowly losing its bearing on reality. For decades after it was proposed in 1950, the Turing Test served as a way to tell machines apart from humans. We are now entering a world where well-configured bots have crossed this once unconquerable threshold and are increasingly indistinguishable from us.

Undeniably, data today is one of the most crucial assets available to organizations, which can extensively explore its value with the help of Machine Learning-based solutions. The task for businesses and institutions is to identify the areas where automation can be implemented and then use the vast quantity and complexity of their data to tap into its latent potential.

Inero Software provides knowledge and expertise on how to successfully use cutting-edge technologies and data to shape the corporate digital products of the future.