Over the past few years, as a team building AI solutions in enterprise environments, we have been repeatedly asked to consult on new AI automation initiatives.

These conversations rarely start with theory.

They start with very concrete ambitions.

We are asked to:

design AI-powered voice agents integrated with core policy systems,

automate underwriting or claims triage in insurance,

build internal AI copilots connected to ERP and CRM platforms,

replace expensive SaaS tools with locally controlled LLM-based systems,

embed AI into compliance, IAM, or security workflows.

Technically, most of these projects are feasible. Architecturally, they can be implemented. But feasibility is not the same as strategic fit. And this is where many AI initiatives begin to diverge.

The Illusion of “Safe AI” in Corporations

In many organizations, we observe a similar decision pattern. Managers, often highly competent, tend to select AI initiatives that feel safe:

automate a specific process,

replace a known SaaS function,

launch a pilot on a non-critical workflow,

reduce headcount in a contained area.

Psychologically, this makes sense.

It is:

measurable,

limited in scope,

easy to justify,

easy to communicate to the board.

And if it fails?

It is tempting to say:

“The model wasn’t mature enough.”

“The AI technology underperformed.”

“We’ll revisit it next year.”

Responsibility shifts to the tool — not to the strategic framing. This is the paradox. What feels safe at the initiative level is often strategically fragile.

Recent market revaluations in SaaS highlighted exactly this vulnerability: workflow automation that can be described in words is the first to be commoditized.

Enterprise AI projects built on the same logic carry the same structural exposure.

Is It Acceptable to Fail?

Is failure acceptable in enterprise AI? Formally — no.

No organization plans to fail.

But avoiding structural risk prevents structural learning.

If an organization:

avoids deep integrations,

avoids architectural decisions,

avoids embedding AI into its operational backbone,

it never develops first-hand understanding of what works and what does not.

In that case, its AI strategy becomes shaped by:

vendors,

evangelists,

sales narratives,

competitor announcements.

And those are not neutral advisors.

The real risk is not failure.

The real risk is outsourcing strategic thinking.

Before Estimation: Context Comes First

This is why we rarely jump directly into estimation. Before any AI initiative enters the implementation pipeline, we ask:

How does the company truly generate value?

Where is the operational bottleneck?

Where is regulatory exposure?

Is AI intended to reduce cost — or to become an operational layer?

Does the organization have the maturity to own this solution long term?

Without this analysis, AI adoption becomes reactive rather than strategic.

And in enterprise environments, reactive initiatives rarely create durable advantage.

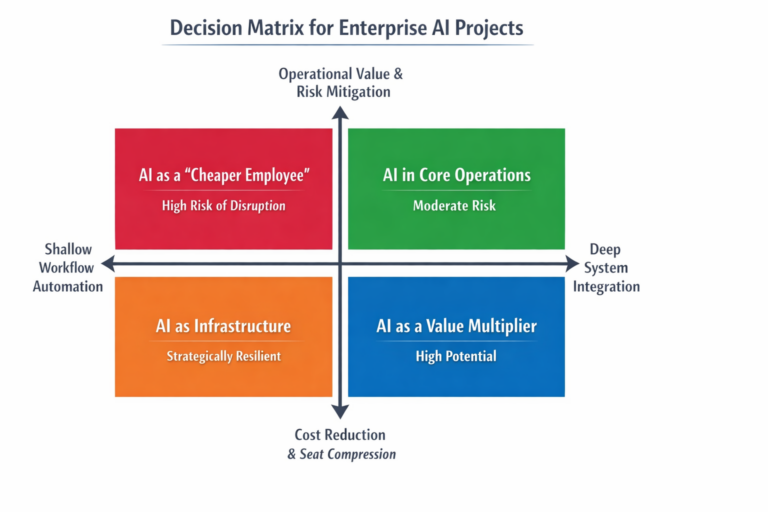

A Decision Matrix for Enterprise AI Projects

From our experience, enterprise AI initiatives can be evaluated across two dimensions:

Axis 1: Depth of Integration

Shallow workflow automation

Deep operational and system integration

Axis 2: Nature of Value Creation

Cost reduction / seat compression

Throughput increase / revenue growth / risk mitigation

This produces four archetypes.
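As a minimal sketch, the two axes can be modeled as a pair of enums, with each initiative placed in one cell of the resulting 2x2 grid. The class names, the `quadrant` and `is_shallow_cost_play` helpers, and the two sample initiatives are hypothetical and chosen only for illustration. The only cell the sketch singles out is shallow integration combined with cost reduction, which matches the "cheaper employee" profile described in the next section; it does not assume which cell the other archetypes occupy.

```python
from dataclasses import dataclass
from enum import Enum


class IntegrationDepth(Enum):
    """Axis 1: depth of integration."""
    SHALLOW = "shallow workflow automation"
    DEEP = "deep operational and system integration"


class ValueNature(Enum):
    """Axis 2: nature of value creation."""
    COST = "cost reduction / seat compression"
    GROWTH = "throughput increase / revenue growth / risk mitigation"


@dataclass(frozen=True)
class Initiative:
    name: str  # hypothetical label, for illustration only
    depth: IntegrationDepth
    value: ValueNature


def quadrant(initiative: Initiative) -> tuple[IntegrationDepth, ValueNature]:
    """Return the matrix cell (axis 1, axis 2) the initiative falls into."""
    return (initiative.depth, initiative.value)


def is_shallow_cost_play(initiative: Initiative) -> bool:
    """True for the shallow + cost-reduction cell of the matrix."""
    return quadrant(initiative) == (IntegrationDepth.SHALLOW, ValueNature.COST)


# Hypothetical examples, not taken from real projects.
faq_bot = Initiative("FAQ chatbot pilot", IntegrationDepth.SHALLOW, ValueNature.COST)
claims_triage = Initiative("Claims triage in policy system", IntegrationDepth.DEEP, ValueNature.GROWTH)
```

Calling `is_shallow_cost_play(faq_bot)` flags the pilot for a closer look, while `claims_triage` lands in the opposite corner of the grid. Real initiatives rarely sit cleanly in one cell, so this is a conversation aid rather than a scoring tool.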

AI as a “Cheaper Employee” (High Strategic Risk)

If a project:

automates something fully describable in a prompt,

primarily reduces headcount,

requires minimal integration,

has low switching costs,

its advantage is short-term.

This is the segment most exposed to commoditization and rapid disruption.

It may generate short-term savings.

It rarely builds long-term advantage.

AI Embedded in Core Operations (Moderate Risk, Real Potential)

Here we enter true enterprise territory:

integration with CRM / ERP / billing systems,

governance and access control,

regulatory accountability,

operational dependency.

Large organizations are structurally conservative about replacing deeply embedded systems due to regulatory and operational risk.

AI initiatives in this layer have higher resilience — but also require architectural maturity.

AI as Infrastructure (Strategically Resilient)

The most resilient initiatives do not replace a single process.

They create a structural layer:

AI orchestration,

AI governance,

AI observability,

AI security,

enterprise AI gateways.

This mirrors what market winners do: instead of competing with AI, they enable it.

This is not AI as a feature.

It is AI as infrastructure.

AI as an Operational Multiplier (Highest Long-Term Potential)

The most promising initiatives are those where:

AI increases system throughput,

improves decision quality (risk scoring, fraud detection),

enhances asset utilization,

reduces regulatory exposure.

This is not “AI instead of people.”

It is AI as a multiplier of the existing business model.

Six Answers We Seek Before Estimation

Before moving into estimation, we typically ask:

Does this project automate something fully describable in a prompt?

Is its primary value headcount reduction?

Could it be replaced by a cheaper model within 12 months?

Is it embedded in core systems?

Does it benefit from regulatory entrenchment?

Does increasing AI adoption strengthen its value — or weaken it?

If the answers point toward shallow cost optimization without structural embedding, we recommend strategic analysis before development.

Because AI implemented without business context can distort operations instead of improving them.
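The six questions can be captured as a simple screening record. The sketch below is one possible encoding, not a formal method: the field names, the `needs_strategic_analysis` function, and the rule "more shallow signals than structural ones" are assumptions made for illustration. It treats a yes to the first three questions as a sign of shallow cost optimization, and a yes to the last three as a sign of structural embedding.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScreeningAnswers:
    describable_in_prompt: bool          # Q1: fully describable in a prompt?
    primarily_headcount_reduction: bool  # Q2: primary value is headcount reduction?
    replaceable_within_12_months: bool   # Q3: replaceable by a cheaper model soon?
    embedded_in_core_systems: bool       # Q4: embedded in core systems?
    regulatory_entrenchment: bool        # Q5: benefits from regulatory entrenchment?
    adoption_strengthens_value: bool     # Q6: does wider AI adoption strengthen it?


def needs_strategic_analysis(a: ScreeningAnswers) -> bool:
    """Assumed rule: recommend analysis when shallow signals outnumber structural ones."""
    shallow_signals = sum([
        a.describable_in_prompt,
        a.primarily_headcount_reduction,
        a.replaceable_within_12_months,
    ])
    structural_signals = sum([
        a.embedded_in_core_systems,
        a.regulatory_entrenchment,
        a.adoption_strengthens_value,
    ])
    return shallow_signals > structural_signals


# Hypothetical initiatives, for illustration only.
email_summarizer = ScreeningAnswers(True, True, True, False, False, False)
fraud_scoring = ScreeningAnswers(False, False, False, True, True, True)
```

Here `needs_strategic_analysis(email_summarizer)` returns `True` and `needs_strategic_analysis(fraud_scoring)` returns `False`. The threshold is deliberately crude; in practice the answers feed a discussion about business context rather than a pass/fail gate.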

Final Thought

AI in enterprise is not a feature roadmap decision; it is an architectural decision. Organizations that treat AI as a tool will optimize locally. Organizations that treat AI as infrastructure will transform structurally.

And the difference between those two approaches will define who builds durable advantage — and who builds short-lived automation.